Back in February, I created a short film and attempted to build an entire production pipeline from scratch.

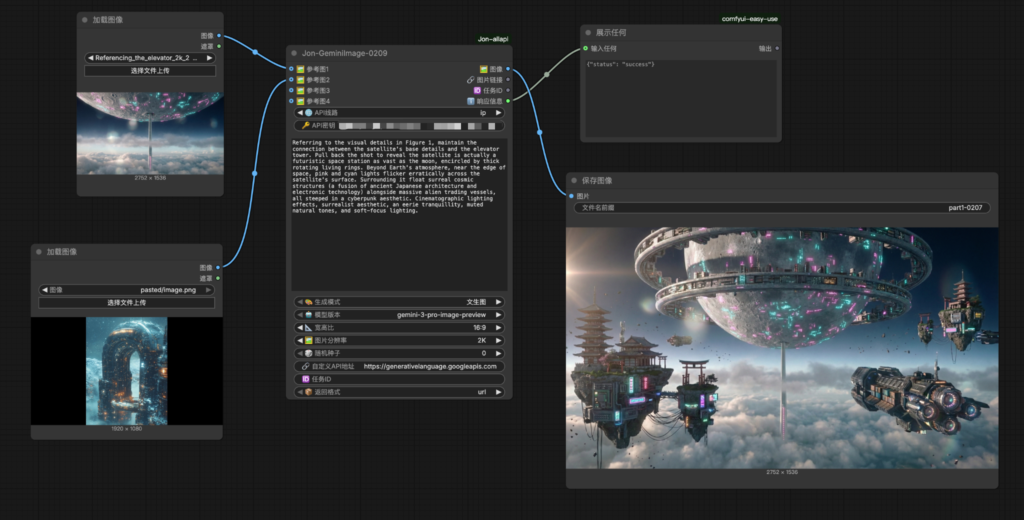

For the workflow, I used ComfyUI. I don’t believe it’s an obsolete tool; on the contrary, it’s an essential environment for building local workflows when you’re working with a limited budget. However, the efficiency of open-source models is heavily tied to GPU power, and their output often pales in comparison to the rapidly evolving closed-source models (for instance, just as I finished my video, ByteDance released Seedance 2.0). Consequently, I opted for a hybrid approach: by using custom ComfyUI nodes, I was able to call APIs for closed-source models to meet the high standards required for both image and video quality.
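To make the hybrid idea concrete, here is a minimal sketch of a custom ComfyUI node that forwards a prompt to a hosted model. It is an illustration under stated assumptions, not the nodes used for the film. The endpoint URL, the `{"prompt": ...}` request body, the `image_url` response field, and the names `HostedImageRequest` and `post_json` are all hypothetical. A real node would follow the vendor's actual API and would usually return an `IMAGE` tensor rather than a URL string.

```python
import json
import urllib.request


def post_json(url, payload, api_key):
    """Send a JSON POST request and return the decoded JSON reply."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read().decode("utf-8"))


class HostedImageRequest:
    """Hypothetical node: sends a prompt to a hosted image API."""

    @classmethod
    def INPUT_TYPES(cls):
        # ComfyUI reads this to build the node's input sockets and widgets.
        return {
            "required": {
                "prompt": ("STRING", {"multiline": True, "default": ""}),
                # Placeholder endpoint, not a real service.
                "api_url": ("STRING", {"default": "https://example.invalid/generate"}),
                "api_key": ("STRING", {"default": ""}),
            }
        }

    RETURN_TYPES = ("STRING",)
    RETURN_NAMES = ("image_url",)
    FUNCTION = "generate"  # name of the method ComfyUI calls
    CATEGORY = "hybrid/api"

    def generate(self, prompt, api_url, api_key, transport=post_json):
        # Assumed response shape: {"image_url": "..."}.
        reply = transport(api_url, {"prompt": prompt}, api_key)
        return (reply["image_url"],)  # node outputs are always a tuple


# ComfyUI discovers nodes through these module-level mappings.
NODE_CLASS_MAPPINGS = {"HostedImageRequest": HostedImageRequest}
NODE_DISPLAY_NAME_MAPPINGS = {"HostedImageRequest": "Hosted Image Request (sketch)"}
```

The `transport` argument exists only so the network call can be swapped out for a stub when trying the node outside ComfyUI.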

For image generation, Gemini’s Nano Banana Pro was my primary choice, with ByteDance’s Seedream 4.5 (now updated to 5.1) as a backup. I still remember the struggle in 2023: painfully running local Stable Diffusion models with LoRAs, chasing “gacha-style” results through standardized prompts at a massive time cost.

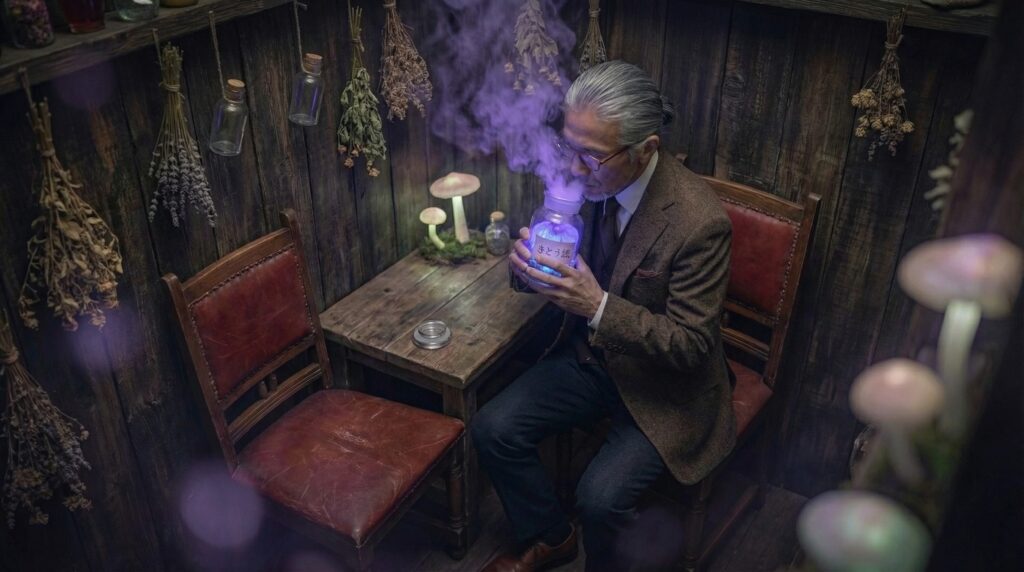

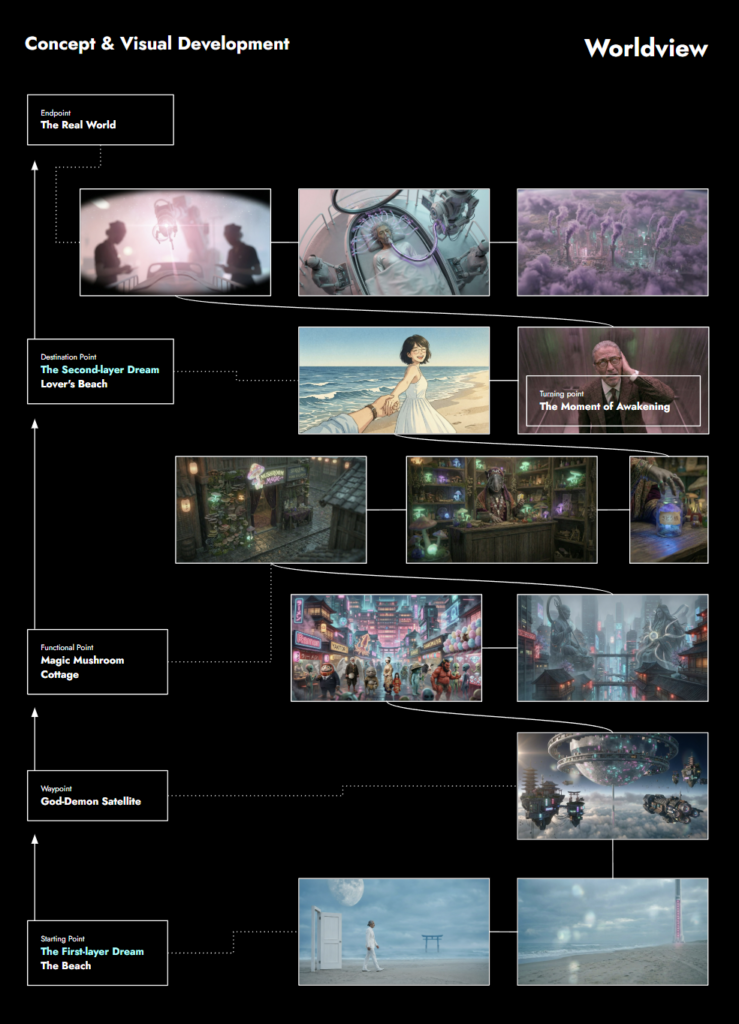

The short film is titled *Paradise Consumed*. The premise might sound a bit cliché: a century from now, as the human population dwindles, AI agents must operate autonomously to sustain the remnants of civilization. But AI processing requires energy, so the agents develop a method to produce power by harvesting human dreams. I centered the story on an elderly Asian man, showcasing his quest for hope within his dreams and the pivotal moment of his awakening.

I don’t want to go into detail about every chapter; this was an experiment, and I don’t claim to be an artist or a director. What I want to share are the interesting observations that emerged from this framework.

As children, we learn about gods, demons, and spirits from stories. Back then, we didn’t realize how vast the world was, or that every nation has its own concrete ideologies rooted in religion, mythology, and folklore. These ideologies become clearer when people yearn for them, but what does that clarity actually look like in one’s mind? When I set my own aesthetic standard, it is dictated by my own shallow self-perception, which is essentially no standard at all. But when a group of people shares a standard, as in folklore, it is dictated by universal cognition and historical records. That is when a “standard” truly exists: a Kappa cannot look like a robot, and Zhu Bajie (Pigsy) cannot be a monkey.

The advent of AI-generated content (AIGC) has undeniably raised the bar for aesthetic standards. We find ourselves amazed by the exquisite character upgrades in short online videos. In this moment, the composition of our “standard” has shifted; it has become a blend of universal cognition + aesthetic homogenization. You don’t struggle to choose between a good apple and a rotten one; the dilemma only arises when you have two good apples. That is the problem we face today. When everyone becomes a producer of high-quality content, are the countless concrete ideologies in our minds still limited by our personal cognition? I fear that what AIGC brings is not “aesthetic democratization,” but rather the homogenization of ideology for the masses.

We’ve all watched countless classic films, but which ones actually stick with us? When I thought about it, the first thing that flashed through my mind was an online short called *Lee’s Adventure*: incredibly soulful and profoundly sad. Then *A Chinese Odyssey* came to mind, a pure tragedy hidden under a comedic shell. Do I just like sadness? Probably not. I perceived that sadness through my own lens; it was generated from within me. I believe that is the magic of cinema: it allows everyone to see themselves without even realizing it. Siddhartha once said, “The others you see are actually yourself.” I only truly understood that a few years ago. That’s why I created an old man exploring his inner world (his dreams); from the beach to the mushroom house, everything is a manifestation of his own internal ideology and his human obsessions. In this world, everything is him.

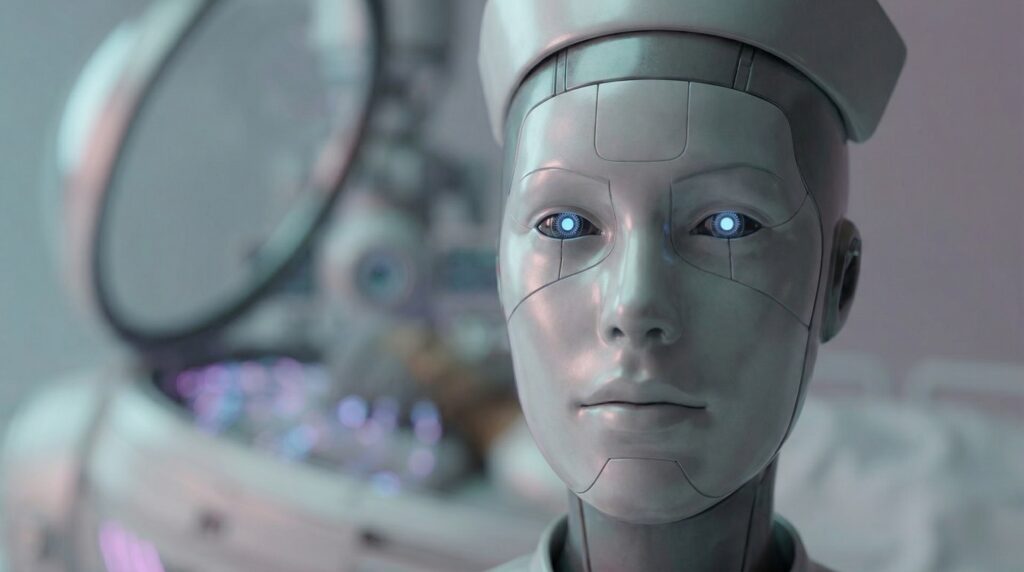

Humans always have exaggerated ideas about things they don’t understand, and the future is no exception. A decade ago, humans imagined AI in countless films, but who could have predicted it would turn out like this? What exactly is a Large Language Model (LLM)? I discussed this with Gemini, and two explanations stood out:

1. It is dreaming in probabilities. When you ask it a question, it isn’t “thinking deeply” like a human. Instead, it relies on statistical intuition to find the most elegant, logical “word-linking” path, eventually “collapsing” into the version of reality that a human is most likely to present in that moment. It makes you believe what you want to believe.

2. It is merely a mirror: highly polished, made of silicon and code. It has no physical form, no soul, and no genuine emotional response to human fate or individual circumstances. The wisdom, humor, depth, or compassion that humans see in an LLM’s response is, in fact, entirely a reflection of the humans themselves.
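The “dreaming in probabilities” image can be made a little more literal with a toy sketch. This is not how any particular model is implemented. It only assumes that a model assigns a score to each candidate next word, and that the scores are turned into probabilities (a softmax) from which one word is drawn. The candidate words and scores below are invented for illustration.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities that sum to 1."""
    scaled = [score / temperature for score in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


def sample_next_word(candidates, scores, temperature=1.0, rng=random):
    """Draw one candidate word, weighted by its probability."""
    probabilities = softmax(scores, temperature)
    return rng.choices(candidates, weights=probabilities, k=1)[0]


# Invented example: possible continuations of "The old man woke up on the ..."
candidates = ["beach", "moon", "spreadsheet"]
scores = [3.0, 1.0, -2.0]

print(softmax(scores))                       # "beach" gets most of the probability
print(sample_next_word(candidates, scores))  # usually "beach", occasionally not
```

Lowering `temperature` concentrates the probability on the top-scoring word, and raising it spreads the probability out, which is one way to read the “collapsing” in the first explanation.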

Sometimes it feels like science and ideology are two ends of the same string. For example, Hinduism venerates cows. From the perspective of ecological economics, a cow’s value as a power source (plowing) and the continuous output of its byproducts (milk, and dung for fuel) in an agrarian civilization far outweigh the short-term gain of slaughtering it for meat. By not eating beef, people protected their means of production and ensured the animals were used in a more valuable way.

Please check out the full video below👇. Thank you for watching.